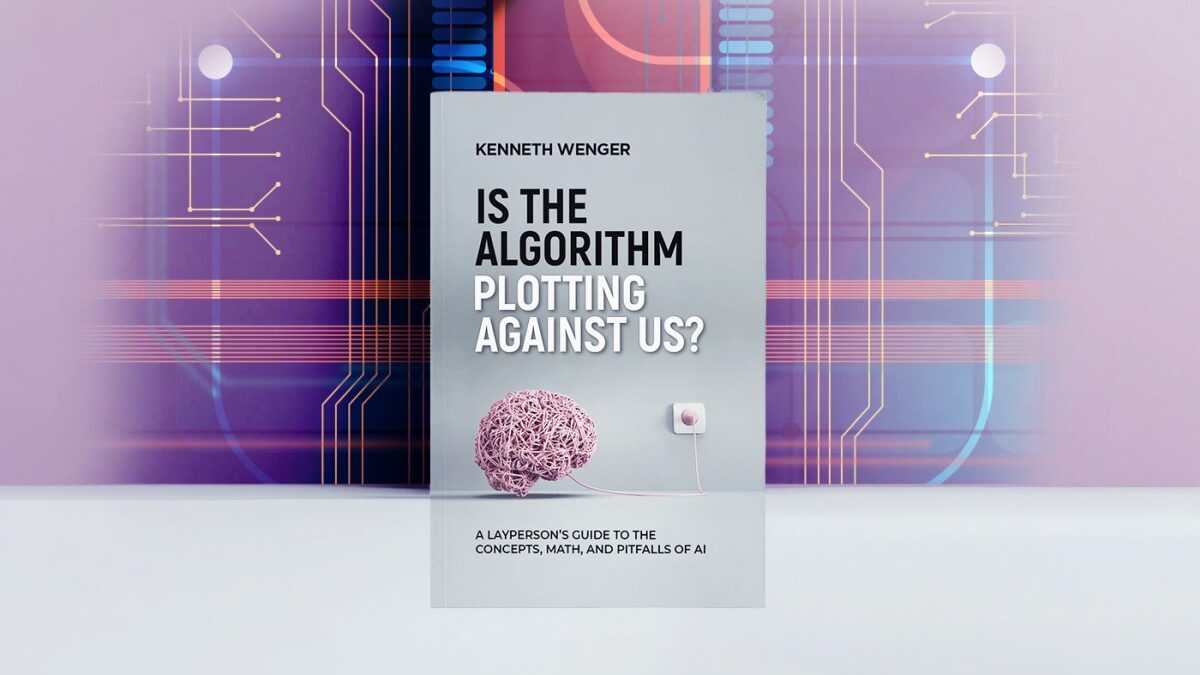

Is the Algorithm Plotting Against Us? by Kenneth Wenger

A Layperson's Guide to the Concepts, Math, and Pitfalls of AI

Artificial intelligence is everywhere―it’s in our houses and phones and cars. AI makes decisions about what we should buy, watch, and read, and it won’t be long before AI’s in our hospitals, combing through our records. Maybe soon it will even be deciding who’s innocent, and who goes to jail… But most of us don’t understand how AI works. We hardly know what it is.

In Is the Algorithm Plotting Against Us?, AI expert Kenneth Wenger deftly explains the complexity at AI’s heart, demonstrating its potential and exposing its shortfalls. Wenger empowers readers to answer the question―What exactly is AI?―at a time when its hold on tech, society, and our imagination is only getting stronger.


Excerpt from Is the Algorithm Plotting Against Us? © Copyright 2023 Kenneth Wenger

INTRODUCTION: LIVING WITH LIONS

Some days it feels like the whole world just can’t stop talking about artificial intelligence, or AI. Some of it seems good and exciting, like self-driving cars. We can already see cars maneuvering in certain situations with little human interaction; it won’t be long before the driving experience is all but automated. Some of it seems straight out of Star Trek or an Arthur C. Clarke novel. Neuralink, a company cofounded by Elon Musk, promises futuristic chips that can be inserted into your brain and interface with your neural connections, initially to help persons with disabilities gain lost functionality and eventually to serve as a much faster interface with our digital world. Imagine surfing the web (Does anyone still say “surfing the web”?) without needing a keyboard. Then, there are voice assistants like Amazon’s Alexa and Apple’s Siri that can understand our endless queries and respond in impressive ways. All these advances might have you wondering how any of this is possible. What is the source of knowledge in these machines, and how do they actually work?

Darker and more ominous undercurrents, however, have also sparked interest in AI. If you haven’t watched the Netflix series Black Mirror, you should. It’s great, and it’s also a reminder, or maybe a warning, of what can happen when we lose control of our technology. Maybe you’re reading this book to find out exactly how terrified you should be of AI. Maybe you’ve read news articles or seen Instagram videos about companies like Boston Dynamics creating animal-like robots that can maneuver in complex environments, perform such sophisticated tasks as opening doors, and communicate with other robots to achieve a common goal. Maybe you’ve thought, “Oh my God, it’s too late. We are all going to die.”

Whether your interest in AI is driven by hope and excitement or gloom and despair, you want to know if this book is for you and what you will get out of reading it. So let’s get right to it.

The purpose of this book is, first and foremost, to explain how AI works at a level of detail that makes these algorithms accessible to a general audience. You do not need a technical background to understand this book; all that is required is a sense of curiosity and a willingness to consider complex subjects. After reading the book, you should have a good handle on the capabilities of state-of-the-art AI algorithms so that you can evaluate and gauge your response to this technology from an informed place.

Lions in the wild can be dangerous to humans. But knowing where they live, when they hunt, and their physiological capabilities can help us modulate our response and behavior. If we find ourselves on a safari in the African savanna, we should be alert. If we see a lion, we might want to stay in our vehicles and keep enough distance so that we can drive away if the lion decides to chase. We can do this because we know how fast our vehicle can travel, and we know the speed of a lion. We also know that lions can run, but they can’t fly. This knowledge helps us gauge the level of readiness we ought to have in the African savanna. The Maasai of Tanzania and Kenya understand this better than anyone. They have coexisted with lions for millennia. They use knowledge developed over generations to keep themselves and their herds safe from lions. Once, long ago, they used their skills to track and successfully hunt lions in traditional rites of passage. Now, increasingly, they use those same tracking skills to protect and help preserve the king of the jungle. The Maasai do not fear the lion; instead, they have learned to understand it.

On the other hand, if we find ourselves in the jungles of South America, we know that we need not fear lions (other predators, sure, but not lions), because no lions live in the wild there. In other words, understanding the lion’s capabilities, limitations, and domain helps us understand when we must worry about lions and when we can be certain that we are safe from them. That is the goal of this book (not to discuss lions, though we will come back to them in a later discussion): to help us understand the capabilities, limitations, and domains of current AI technology.

First, we need to define what we mean by AI in this book. When we say “AI,” we are referring to a specific class of AI algorithms: artificial neural networks. Readers may have heard of deep learning used in conjunction with AI these days as well. This term often describes neural network models with multiple layers of artificial neurons. We come back to this relationship in chapter 1 and discuss the significance of each layer in a neural network model. It is important to note, however, that AI is a broad discipline in computer science; it spans many areas of research, and countless algorithms fall under this umbrella term. We are aware of the umbrage taken by purists—and those inclined to proper definitions, terminology, correctness, and so on—when we use the terms AI and neural networks interchangeably in this book, but the reality is that most people ignore this distinction often enough that those terms are regularly used interchangeably in informal contexts. Before proceeding, let’s make a promise to eternally remember that AI is a broad discipline, and neural networks are a class of algorithms belonging to that discipline. Having promised to remember the distinction, we can now do as we please.

Artificial neural networks are by far the most popular and successful class of AI algorithms in use today. They are currently the driving force behind advances in robotics, self-driving cars, Amazon’s Alexa, and Google Assistant. In the pharmaceutical industry, it is expected that neural networks will make significant contributions to the discovery of molecules that can be synthesized to treat debilitating diseases like Parkinson’s, multiple sclerosis, and many others. At this point, it seems like no problem is too big or too abstract for artificial neural networks to handle. The flip side is that their unprecedented level of success has also earned them a certain level of distrust, or at least trepidation. If it can respond to me and understand my queries so well, what else can it do? What is it thinking?

Popular beliefs, science fiction, and media sensationalism have conditioned us to distrust AI systems. That general distrust has taken on one particularly prominent flavor: these machines will eventually become self-aware, wake from their eternal slumber, and kill us. The problem with such concerns is that they serve as a bit of a red herring. Regardless of whether we will eventually have to contend with self-aware machines, that is certainly not the only issue we should be discussing at this time. Presently, science does not have a complete theory of consciousness. We do not understand how consciousness arose in ourselves. We don’t even have a definition of consciousness that everyone agrees with. Recent advances in AI, mixed with a lack of understanding of how AI systems work and a tendency on the part of media companies to generate revenue by stoking fears, contribute to a general sense that conscious artificial systems are just around the corner, ready to enslave us. Society may have to grapple with conscious AI in a distant future, but before we get there, plenty of more urgent matters warrant discussion: What happens when AI is used in ad campaigns? What about by law enforcement? Can AI algorithms solve a problem in unique ways, where their measure of “success” differs from that of their human designers? This last example is the well-known alignment problem. Suppose you ask a robot to get rid of the CO2 in the atmosphere to combat climate change, and it finds that the best way to achieve this goal is to get rid of the human population. The robot did not consciously decide to get rid of humans. The situation simply describes an optimization problem gone wrong (for us!). When our current AI technology is being applied, and what unintended consequences its misuse might have, are real-world, present-day issues that get pushed aside because they are not as exciting as the thought of berserk smart blenders chasing us around the house.

In this book, we first address the functioning of neural network algorithms. We explain what it is that makes them tick and how they manage to work at all. Then, we critically examine their limitations, the rights and trust we have already granted them, and their potential, in some cases, for causing significant harm to our society if we are not careful. But let’s be clear: this harm is completely self-inflicted. The algorithms are not yet “out to get us”; we just don’t always use them in healthy and productive ways.

Why should you get involved in this discussion if you are not a scientist? Because each of us can influence our collective future. Technology advances with research, and research is fueled by money. Institutions get billions of dollars in government grants for research into different areas. The grants are made possible by taxpayer money: your tax money. You have the ability to influence policy every time you go out and vote. The public can decide what areas of research should get more attention. But how can you make an informed decision without being, well, informed? When it comes to artificial intelligence, there is a lot of speculation, often in the media, about the dangers and the capabilities of AI. You will be much better served by understanding how these systems work and what we realistically need to worry about rather than making an emotional decision based on uninformed sensationalist ideas. This way, you can at least arrive at a decision by way of a thought process. If we don’t have a thought process to ground our decision-making, as often happens, we get disproportionate (typically radical and extreme) responses driven by fear. This happened when stem cell research was all but banned in many countries out of fears over possible misuse: critics concocted specious moralistic arguments and completely disregarded the ethical dilemma of abandoning research that could contribute significant insight into terrible diseases like cancer, AIDS, and degenerative muscle disorders.

For many of us, AI immediately conjures up the specter of Skynet (an intelligence created for the purpose of protecting national security that inevitably gains consciousness and wreaks havoc on humanity) and its cyborg assassin, the Terminator T-800. But our fear of AI might derive from a more primitive and innate response to a perceived threat, a response that predates the development of technologies whose imagined descendants populate movies and science fiction novels: the fear of the unknown. More specifically, that fear has often manifested as a fear of the Other: a creeping, gut-borne feeling characterized by increasing and alarming suspicions of newcomers, outsiders, or anyone beyond our circles of intimacy or relationality. What are these circles? Interestingly, we construct different ones depending on certain rules of engagement. First, there is the family circle, where we extend the most trust. Beyond this, we have friends and more distant relatives. Even our respective countries form a certain circle of trust, if not comfort; we typically feel more connected to our compatriots than to people from other parts of the world. We notice this when we travel and meet a fellow expat. Immediately we feel a connection to them even though we know very little about them; we just know that they belong inside one of our circles.

In its beneficial, or at least benign, form, this distinction between insiders and outsiders can foster a sense of community. In its malignant form, as the twentieth century showed in unconstrained horror, it leads to xenophobia and fascism (and unfortunately such virulent nationalism has been on the rise again around the world). So far, we are just talking about relationships between people. What then can we expect from our relationships with other beings, including the artificial kind? It seems natural that we should be suspicious of artificial intelligence. In some ways, it is the ultimate threat: by definition an outsider, not being human or natural, yet possessing the crown jewel of all qualities that separate us from mere animals: intelligence. Intelligence has given our species the superpower to change our planet and dominate all other living things on it. Looking at the full scope of what we have done with our intelligence, even taking in our remarkable creations in the arts and sciences and our advances as social beings, we can see that in some very specific and clear ways it has not served the natural world well: we have destroyed forests, polluted oceans and waterways, wiped out entire species of plants and animals, and threatened those that are still around. It is no wonder that we should be outright terrified of a being, or entity, that shares very little with us yet finds itself in possession of our ultimate weapon.

It seems that our primary concern, then, is with the form and extent of so-called intelligence in artificial systems. Over the course of this book, we systematically describe the mechanisms that are responsible for the advances we see today. Once we finally understand the machinery behind AI, we should feel empowered to take charge of our technology instead of being fearful of some synthetic omnipotence. At the very least, establishing a foundational understanding will give us the tools to judge what we should accept and what we should constrain when it comes to artificial systems that are now making decisions that affect our lives. My hope is that this book will not leave you feeling afraid but rather informed and, therefore, empowered.

The rest of the book comprises four main chapters and a short conclusion. In chapter 1, we discuss the first artificial neuron created by humans and the history and motivation that led to its creation. Along the way, we meet individuals who were driven purely by curiosity, that insatiable need to understand everything about our universe, from its physical laws to the phenotypic expressions of those laws. We examine how the humble artificial neuron evolved into a network of artificial neurons powerful enough to solve problems that were once considered computationally intractable, such as image recognition and natural language processing (e.g., understanding speech and writing, translating between languages). Wherever possible, we point out similarities between artificial and biological neurons, similarities that inspired the early work in artificial intelligence. Importantly, we unpack the multilayer perceptron, the first artificial neural network architecture ever created and still a fundamental building block of most state-of-the-art architectures in use today.

Chapter 2 is all about vision. Here we discuss the problem that computer vision presents. Today, we take for granted that cameras and gadgets can track our faces and follow our movements. Even relatively inexpensive drones can be programmed to track and film us as we ski down a mountain. We search images for content using a variety of applications and tools. These tools can take a search query from us (something like “pictures of red cars in autumn”), analyze images for content (that shows red cars in autumn), and then return a list of images matching these search criteria. In the medical domain, similar applications are capable of searching biopsy scans for anomalous tissues that might be signs of disease. How is any of this possible? If you keep up with technology, none of this is surprising or even impressive anymore. But computer vision was once considered among the most elusive subjects to tackle in computer science. In chapter 2, we see why computer vision is such a fundamentally difficult problem to approach, and we discuss how artificial neural networks have all but solved this problem. Finally, we introduce the convolutional neural network, which has become the de facto architecture for computer vision. In fact, together with the multilayer perceptron, it forms a set of fundamental building blocks used in most neural network architectures today. Throughout, we continue to note the similarities between artificial and biological systems and, wherever possible, describe the biological system as the inspiration for and intuition behind the development of the artificial one.

The overarching goal of the first two chapters, then, is to specify what neural networks look like and how they operate. Providing the layout of the neural networks (in graphic and linguistic form), we describe each layer and show how each neuron in one layer is connected to the neurons in subsequent layers. We also detail the types of operations taking place at each neuron. After reading chapters 1 and 2, you should be able to answer, at least at a conversational level, what neural networks are and how information is processed from the input to the output.

In chapter 3, we dive deeper into the how questions. This is where we look at what gives artificial neural networks any right to work and elucidate the mathematical intuitions that govern most of them. We describe the training processes that enable neural networks to perform certain tasks. This information allows us to understand their limitations and start to grasp the current state of artificial intelligence. We explore whether these systems are capable of conscious thought—whatever that means to you—or whether they are enacting a more primitive method of information processing.

In the final main chapter, we take a step back from our pursuit of understanding neural networks in specific and operational terms. Instead, we put to good use the information we learned in the preceding chapters and attempt a bit of introspection. Using our newfound knowledge, we again ask the question of how dangerous artificial intelligence is and proceed to answer the question by evaluating levels of threat. We discuss areas (the judicial system, advertising) where artificial intelligence poses significant risk—without requiring the technological leap of gaining consciousness—and examine industries (automotive, health care, warehousing) where automation promises an improvement over present-day standards. More importantly, in this chapter we evaluate the current AI revolution against previous technological revolutions and attempt to learn from the past to understand our current moral and practical obligations.

Having defused the panic about a robot takeover, in the conclusion, we provide a simple test for identifying classes of problems that are amenable to AI-based solutions and classes of problems that should remain in human control for the foreseeable future. The test provides a path to action by asking a set of questions for any new class of problems we may want to solve using automation. This set of questions enables us to reflect on the problem to understand whether the solution requires nuanced and difficult moral considerations or simply a set of specific rules to follow. Armed with these simple questions, we can then take control of deploying our AI tools in responsible ways.

So let’s get to it. The path to learning whether Alexa is conspiring with Siri begins with chapter 1—including a brief diversion into what amounts to present-day technology’s ancient past.
